- Blog

- Neenah creative collection envelopes

- Logmein hamachi login

- Sunquest pro timer switch

- Wall sconce with on off switch

- Raskin bald

- Update macfuse

- Link you copied

- Percentage of fulltome positions at venture forthe

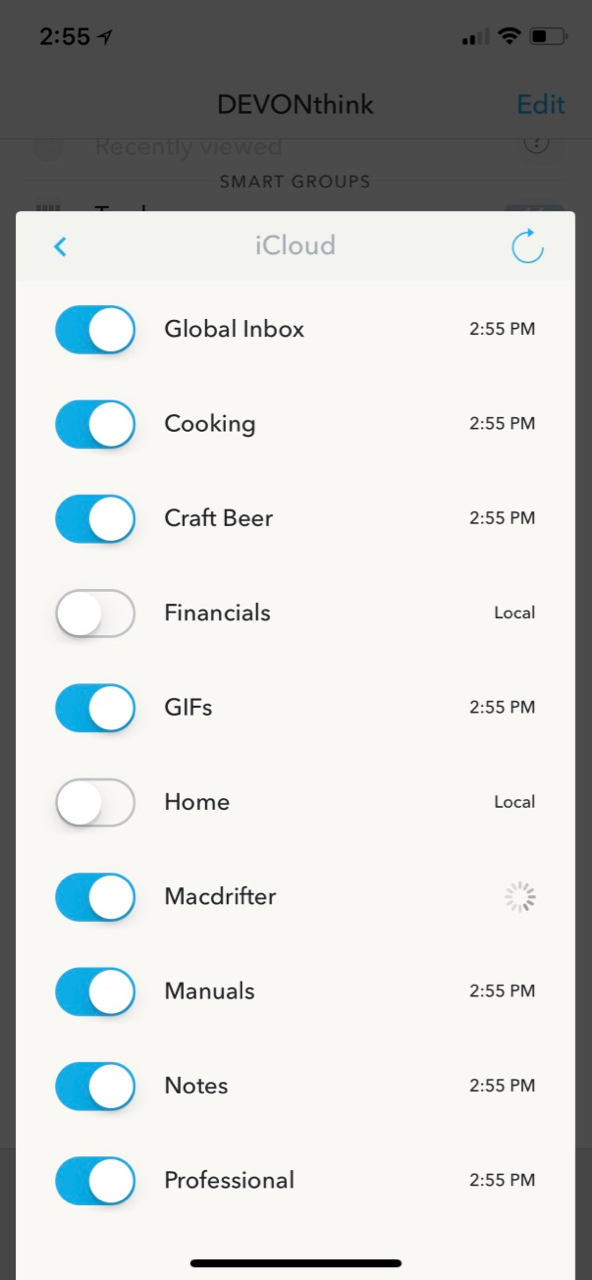

- Devonthink to go 2

- 2440x1440 dota 2

- Boyfriend dungeon characters

- Idle champions of the forgotten realms ahk script

- Softorino youtube converter 2 free trial activation number

- Taxes due date

I am not always using a browser to make HTTP requests, and for recreational web use I use a text-only one exclusively, so a "browser history" is not adequate. Instead, I automate a log of all the HTTP requests the computer makes, which naturally includes all the websites I visit.^1

In the loopback-bound forward proxy that handles all HTTP traffic from all applications, I add a line that includes the request URL in an HTTP response header, called "url:" in this example. The line begins with `http-response add-header url "…`. This allows me to write simple scripts that copy URLs into a simple HTML page, which I then read with a text-only browser (links). To look at the POST data I might do something like …

I also use a system for searching the … One query per file. What's non-obvious is that each file can contain search results from different sources, sort of like the "meta-search engine" idea but more flexible. The simple HTML format contains the information necessary to continue searches at any time, so a larger and more diverse set of search results can be retrieved.
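The "simple scripts to copy URLs into a simple HTML page" step could look something like the Python sketch below. It is only an illustration under assumptions: the logged URLs are taken to be available one per line, and the function name `urls_to_html` and the page layout are hypothetical, not the author's actual script.

```python
import html


def urls_to_html(urls, title="Visited URLs"):
    """Render an iterable of URL strings as a minimal HTML page of links."""
    seen = set()
    items = []
    for url in urls:
        url = url.strip()
        # Skip blank lines and URLs that are already listed.
        if not url or url in seen:
            continue
        seen.add(url)
        # Escape the URL so characters like & and " cannot break the markup.
        escaped = html.escape(url, quote=True)
        items.append(f'<li><a href="{escaped}">{escaped}</a></li>')
    body = "\n".join(items)
    return (
        f"<html><head><title>{html.escape(title)}</title></head>\n"
        f"<body><ul>\n{body}\n</ul></body></html>\n"
    )
```

Calling `urls_to_html(open("urls.txt"))` (a hypothetical file name) and writing the result to disk gives a page that a text-only browser such as links can open directly.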

Would dislike going back to living without it.

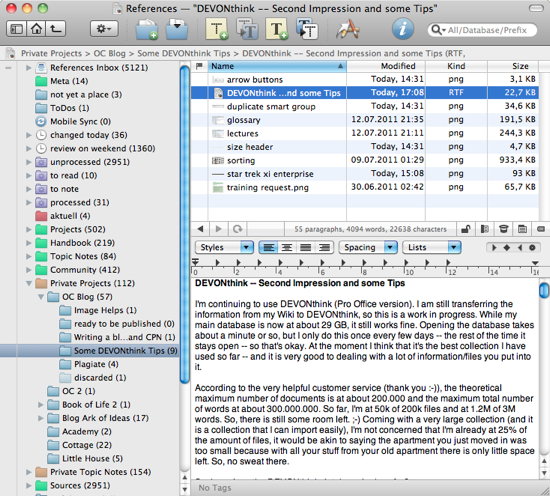


I used to try bookmarking things with the browser's built-in bookmark manager, later with Raindrop, and even by copying links into Obsidian, but this wasn’t really all that effective. Then I came across a video by Curtis McHale that completely changed the way I keep track of everything. After watching the video I trialled DevonThink and was massively impressed.

Now, every article I read that I find interesting I save as either a PDF or a web archive so I can search for it and find it later. I do the same for useful Stack Overflow posts so I know I’ll be able to find them if necessary. On top of this I bookmark all kinds of useful sites and categorise them in folders in their respective databases. This allows me to keep Obsidian for just pure notes and writing. If I want to link between the two, I can use Hook to embed links between the two applications. If I want proper reference formatting for something, I can open it from DevonThink in the browser and then save it to Zotero. Alternatively, some people save everything to Zotero instead of DevonThink and then index the folder using DevonThink so it is included in their search.

I highly recommend that anyone with a Mac try the free trial of DevonThink; I think it’s something like 100 hours of usage.

The AI part would not be trivial in general, especially because of "content creators" and "SEO optimizers" and, last but not least, dynamic web pages.

Browsers track visits in their history, Firefox for example in a file called "places.sqlite", which you could copy and use as a base log. If you don't mind a bit of tinkering and are a Linux user, you can start with a squid proxy on your server, which will keep a log. Your browser can connect to that server either directly, via SOCKS5, or even through an ssh tunnel if the proxy is running on your (external) server. These ssh tunnels are very flexible things.

I use an ssh tunnel with SOCKS5 on top of it so my network traffic cannot be traced by my provider. The tunnel is set up in a terminal window with a command like `ssh -D 8080 -l user <server> sleep $…`, and the browser (Firefox for example) is configured to use the proxy at "localhost:8080", which routes its network connection through the tunnel exiting at my server. The trailing command can be any command you want to run instead of a real login session, i.e. one that gives you a log from which you can extract your destinations.
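For the browser-history route, a copy of Firefox's "places.sqlite" can be read with Python's built-in `sqlite3` module. The sketch below is a minimal illustration, not a complete exporter: it assumes the `moz_places` table with `url`, `title` and `last_visit_date` columns (the latter in microseconds since the Unix epoch), and the function name `read_history` is hypothetical. Run it against a copy of the file, since Firefox keeps the original locked while it is open.

```python
import sqlite3
from datetime import datetime, timezone


def read_history(db_path, limit=20):
    """Return (visit time, url, title) tuples, most recent visit first.

    Assumes a copy of Firefox's places.sqlite whose moz_places table has
    url, title and last_visit_date (microseconds since the Unix epoch).
    """
    conn = sqlite3.connect(db_path)
    try:
        rows = conn.execute(
            "SELECT url, title, last_visit_date FROM moz_places "
            "WHERE last_visit_date IS NOT NULL "
            "ORDER BY last_visit_date DESC LIMIT ?",
            (limit,),
        ).fetchall()
    finally:
        conn.close()
    # Convert the microsecond timestamps to timezone-aware datetimes.
    return [
        (datetime.fromtimestamp(ts / 1_000_000, tz=timezone.utc), url, title)
        for url, title, ts in rows
    ]
```

The returned tuples can then be appended to whatever log format you already keep, for example one URL per line.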
